The Cleaners

11/12/18 | 1h 26m 11s | Rating: TV-MA

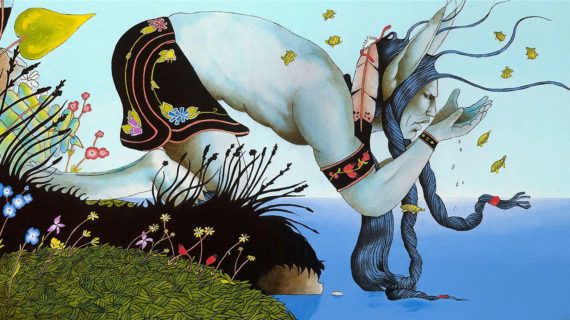

Social media sites have been under intense pressure to monitor and delete offensive, pornographic, and incendiary posts. Compassionately portraying the Filipino workers who comb through thousands of online images in the dark of night, The Cleaners exposes the dark side of information technology.

Delete. Delete. Ignore.

female narrator

They work in secret, reviewing the most explicit social media posts from around the world. There's this code of silence in the Silicon Valley. The companies have more and more power to decide what must be taken down. There are tens of thousands of people who are doing this work.

narrator

From every suspicious word... Our job is not easy. - Delete. To every gruesome moment. Delete. - Ignore. Filmmakers Hans Block and Moritz Riesewieck expose the shadowy figures employed to censor our Internet. That outsourcing should be, really, quite disturbing to people in democratic societies. And examine how our lives have come to depend on them. If you commit one mistake, you could ruin more than one life. Ignore. It could trigger war. "The Cleaners," now only on Independent Lens.

upbeat pop music

woman vocalizing

narrator

Delete. Ignore.

dramatic music

static crackles

keys sounding

narrator

Ignore. Delete. Delete. Ignore. Ignore.

static crackles

suspenseful music

narrator

We stand for connecting every person for a global community, for giving everyone in the world the power to share anything they want with anyone. Mark Zuckerberg is now the front-page editor for every newspaper in the world, effectively. Delete. Delete. Social media platforms, they need and want to maintain complete control over what goes on. Facebook has a bigger population than any state in the world, and so, when it acts as censor, it is as powerful as a state. Ignore. Ignore. If I didn't have social media, I wouldn't be able to get the word out. I probably wouldn't be standing here, right? I probably wouldn't be standing here right now. Delete. Delete. There are tens of thousands of people who are doing this work. All of it's done in secret, it's done by unknown people, and they're actually taking major editorial decisions.

explosion booms

narrator

Delete. Ignore. Delete. Delete. Ignore. - Ignore. Delete. - Ignore. Delete. Delete. Delete. The reason why I speak to you, because the world should know that we are here. There is somebody who's checking the social media. We are doing our best to make this platform safe for all of them.

static crackles

dramatic music swells

keys sounding

stirring piano music

siren wails distantly

horns honking

electricity buzzes

speaking English

speaking foreign language

speaking foreign language

mouse clicking

siren wails distantly

mouse clicking

speaking foreign language

children shouting

engine roaring

narrator

I started at Google in 2004. It has been a privilege to be part of building the infrastructure of the world we're now living in. To be part of something that so clearly is powerful with the potential to really change lives for the good, that's an amazing thing and a privilege to be a part of. You start with the question, what's the vision for what should be on your platform? What isn't appropriate? What don't you want in your community? And there are choices in that, right? Like, there is a choice to say, "I'm going to not let anything go up before I review it." That's a choice. Or, "I'm gonna permit most things and only review the things that are complained about." That's also a choice. When your value is around allowing more expression, allowing this sort of democratized platform to really live, then I think you go with the more... liberal policies.

static crackles

narrator

Do you solemnly swear that the testimony you're about to give is the truth, whole truth, and nothing but the truth? Yes. - I do. Thank you very much. Nicole Wong, who is the associate general counsel and chief privacy officer for Google-- Mrs. Wong, our staff went on the Internet, and they put in "preteen" plus "sex" plus "video." If you just looked at the Google sites, I mean, it looks like a hardcore pornography-- I mean, just "sex games" and "preteen sex" and "teen porn." Mister Chairman, we have multiple layers, two review--it's terrible that these sites are there, and we should-- Well, I think we all agree, but it looks like your company's doing the least to try to block it or to stop it. That's, I guess, what I'm trying to get at. Yeah, and I think, in addition to the levels of review that we try to do to take it out, we also have the biggest search engine on the Internet. We have many billions of pages-- So it should be easier to find it then. Should be easier to find it, so I would think you'd have more ways to block it than the others if you have the biggest search engine. We have the biggest search engine with many more pages to review, and we have our own search quality engineers who are trained to look for and remove these types of sites. We do the best we can. Okay.

siren wailing in the distance

narrator

When we started training, I didn't know what content moderator was. I had no idea, and that was the first time I heard about it. They explained to us what are we gonna see, what are the tools that we're gonna use, and what should we expect in this job. I remember feeling that I wanted to quit already. The most shocking thing that I saw, when I was a content moderator, back then, is that a kid, sucking a dick, inside a cubicle, and the kid was, like, really naked. It was, like, a girl around six years of age, and he was sucking a dick, and another picture taken was-- the child was actually on the bed, and he-- and she has a cum shot in here, which is really--I don't know, unforgivable for me to see, so I went straight to my team leader and told him that I can't do this. I really can't do this. I can't look at the child. But then he told me that I should do it because it is my job and I signed a contract for it.

dramatic music

narrator

When you open the floodgates and you ask the entire world to broadcast yourself, upload your life, share everything that you can think of to share, people respond. People with all sorts of motivations and interests and desires respond. When Facebook or Google claim that they don't have any employees in Manila, they can legitimately do that by using the labor of a third-party outsourcing firm. It's true that the paychecks don't come from Google or Facebook. They come from an outsourcing firm based in the Philippines. Commercial content moderators labor in the shadows of social media platforms, out of sight and out of mind, actually unknown to the millions of users of the platforms who've never given it much of a thought who does the cleanup work on their platforms.

gun popping

speaking foreign language

narrator

Whoo! Oh, my God.

melancholy music

speaking foreign language

speaking foreign language

rooster crows

narrator

Delete. Ignore. Delete. Hmm. Mm... All right, safe to delete. Delete. Ignore.

gasps

narrator

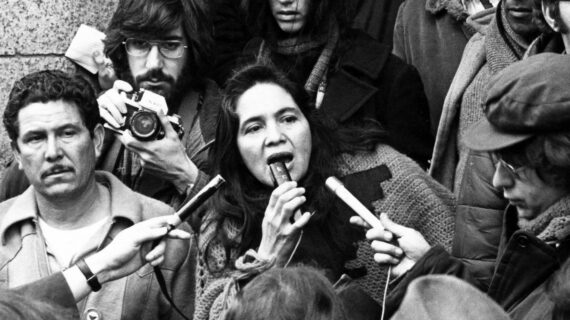

Oh, it's delete. Ah, delete. Delete. Mm. January 2016, I painted a piece of Donald Trump with a small penis called "Make America Great Again," and it went viral, and millions of people shared it over-- across many social platforms, and, a few days later, he mentioned his penis size on a debate, and I remember my friend saying-- she called me and she was like, "Did you just make Donald Trump talk about his penis size on a debate?" And he referred to my hands, "If they're small, something else must be small." I guarantee you there's no problem. I guarantee it. All right.

laughter

narrator

I posted it on my Facebook page, and, within three days, it had something like 50 million shares and-- over so many social platforms. It just went crazy, and I had no idea it would go that far. Mm-hmm.

speaking English

speaking foreign language

mass singing indistinctly

narrator

My Facebook page was shut down, and everything I had, every account I had was shut down, and so all I had is my social accounts, and I can't tell anyone what's going on or what's happening. Facebook says fine art is okay. Sure, you could consider it porn, but when it comes to art, it's a representation of that. It's not actually real. It's not a photograph. It's not someone who hasn't consented. It's just a fictional piece. Again, it's not violent. It's not sexual. It's just a naked human body with Trump's face on it. I just don't understand why people think someone naked is so disgusting.

keyboard clicking

static sizzles

narrator

Mr. Colin Stretch, general counsel for Facebook, Mr. Sean Edgett, acting general counsel for Twitter, and Mr. Richard Salgado, the director of law enforcement and information security at Google. Millions of Americans use your technology to share the first step of a grandchild, to talk about good and bad things in our lives, and I would like to say to all of you, you've enriched America, but the bottom line... is these technologies also can be used to undermine our democracy and put our nation at risk. Do you all agree it's bad for business if the American public perceives you as being able to have your platforms hijacked by terrorists to radicalize Americans? It's all bad for business, right? It's beyond bad for business, Senator. There's no place for terrorism on Facebook. We would agree with that. I agree with that proposition. Uh, I'd like to ask some questions. It would seem to me that there's a pretty big distinction between potentially jihadi accounts-- there's a whole range of theological interpretation about people who don't quite believe in violence in the name of religion and people who do. The intricacies of jihadi theology is not something that an engineer is exactly trained to do, so who are your content experts by domain? How do you hire for that? So we have thousands of people who-- who, as part of their job on a regular basis, are attempting to keep terrorism off of Facebook. We have 150 people who do nothing else. How many people do you have, Mr. Edgett, at Twitter? Well, we harness the power of both technology algorithms, machine learning, to help us and also a large team of people that we call our Trust & Safety team and our User Services Team. It's hundreds of people. What about you? - Internally, we'll have thousands of people working on them. We also get a good deal of leads on content that come from outside the company. So, in view of that, Mr. Stretch, do you think 150 people is enough people? 
Senator, to be clear, we have about 10,000 people who are working on safety and security generally, and we're committing to investing more and doubling that number by the end of 2018. Hmm. Delete. Delete. Delete. Ignore. Delete. Delete.

mouse clicking

narrator

Delete.

speaking English

narrator

Yeah.

explosions booming

man speaking foreign language

man shouting indistinctly

narrator

Whenever we see a photo or a video on social media, we archive it immediately because we know, later, it might be taken down. It's very important for us to document these photos because we might not have this photo again. Pretty much everyone in Syria has a cell phone now, and pretty much everyone is an activist. They will document every alleged air strike, the aftermath of the air strikes, victims, destruction, whether this is done by the coalition, by Russia, the Syrian regime. They will collect all these videos, and they would upload them on their account. After we have collected the data, we try to geo-locate it. We'll try to find out the exact place, and we'll publish it online. We help paint a picture of the war in Syria. So this is the school. - Yeah. And the air strike was in the school area, so the neighborhoods around it. Without our work, the armies would have, or the government would have, a straight pass without anybody challenging them. There would be, I think, more civilians getting killed. There would be less closure. We would not be able to know what's going on. These videos are needed. These videos are part of the war. They provide information. They provide evidence for the future. But the problem is, these videos are quite often classified as ISIS videos. It's been seen that this is very graphic, so they get deleted. Now, the censorship that's taking place on YouTube, it's affecting a lot of organizations with a lot of videos about Syria and air strikes and destruction, and they had their accounts suspended.

phone ringing distantly

narrator

Our life is different from here. We have war. That's a very important reason why the content is different. The war and air strikes and killing, that what we would focus on. That's what we are gonna report about. We wouldn't report about football activities or, yeah, "Let's go to this place for brunch."

chuckles

narrator

No, unfortunately not.

indistinct shouting

shouting continues

mouse clicks, audio stops

narrator

A decision about what constitutes terrorist content is a really context-based decision. It may show up on one platform as a true threat, and it may show up on another platform as news or as a satire, right, or criticism commenting on a piece of content, and so I think that so much is taken about the context around a piece of content, it's hard to know whether something should be removed or not. You know, think about that global workforce, right, and they may be reviewing content coming from a country they have never been to with no historical grounding in what they're seeing. It's really, really hard for some of these difficult pieces of content.

speaking foreign language

speaking English

speaking foreign language

printer whirring

narrator

It's weird to be here physically in Berlin while emotionally and mentally completely there in Syria... just watching the war from a distance.

minimal piano music

narrator

I have this guilt feeling of being the survivor. I decide to post these photos on my Facebook wall, and then Facebook took it down after three days. There was no explanation. I tried to, like, in every possible way to be in touch, but it was impossible, and then to be able to show these photos, I decide to work out and peel out the skin of the photo where I take the victim out of the picture so you can actually show these photos without using this excuse that it's not proper to show. Sometimes, I try to think about it. Who decides what is the policy of Facebook? I would love to meet these people. I would love to-- like, know who they are, because, by logic, their background, who they are, it affects how they think. That child didn't die out of a normal situation. It was caused by the whole international game. So giving them a very loud voice, as I am, yeah. Yeah, definitely. We should keep disturbing the world. Annoy in a good way. The companies have more and more power to decide what can stay up and what must be taken down. They take advantage of our desire for ease, our resistance to effort, our resistance to challenge, and I think, over time, if we're not already there, it will interfere with our ability to have critical thinking. It interferes, potentially, with our ability to be challenged. To the extent that we're limiting expression, we're also limiting challenge. We're limiting effort. People shouldn't be surprised if, in the future, there's less information available to them. Less edgy, less provocative information available online, and I think that we will be a poorer society. We'll be poorer societies for it.

goofy arcade sounds

speaking English

horn honks

siren wailing, horn honking

speaking English

speaks foreign language

speaking foreign language

upbeat dance music

woman singing indistinctly

speaking foreign language

speaking English

cheers and applause

speaking indistinctly

narrator

Have you seen her pictures? You've seen her pictures online? Her sexy pictures? Before, her Facebook page was full of these things before she became political. Now, one time during-- this was during the time when the president still had this media blackout. He refused to give interviews to the media. He gave Mocha Uson an "interview."

speaking English

narrator

And in that interview, Mocha Uson was fawning all over the president, expressing her support for the president, and calling for a boycott of media. So, I wrote a post on my Facebook, and I said that, "It's fascinating that now a meeting between a president and an avid supporter is branded as journalism." I've had people tell me to buy a bulletproof vest. I've had people tell me that they would blow my head off. I have had people tell me that they would bury me alive. I've been called corrupt. I've been called biased. I've been called a prostitute. The thing here with Facebook is that...

sighs

narrator

It's so much easier to fall through the trap of taking sides, because it's so easy to publish, just post and click. People can be very emotional, and people can be easily carried away with quick snippets of information that makes you think that you understand. This is why, for example, we have a Trump, or this is why we have a Duterte, or this is why we have Mocha Uson. The danger is that we might lose democracy because we're willing to give it up.

car engine revs

dramatic music

narrator

There's this code of silence in the Silicon Valley. Everything is always presented in a very varnished, manicured way. Everyone's killing it. Everything's going great. Every plot is up and to the right. Negatives in general are just not talked about. At least when I was there, the workforce at Facebook was very young, and if you're young, you don't really have draws on your time. You don't have a wife, you don't have kids, whatever, right, and so there's a lot of people who kind of semi-lived at Facebook. You were encouraged to hack the world, however possible. Facebook is fundamentally a tech company, right? It's run by engineers. It thinks about product. It thinks about infrastructures. It thinks about scale. It thinks about the user experience. It's not a media company, it doesn't think about content or content creation or content editing or curatorship. It is just not part of its DNA. Whenever Facebook has been confronted with, "Hey, you have a responsibility to this thing," their response is, "Eh, we just do math. We have this algorithm, and we give you what you want, and we edit almost nothing." But, you know, the political reality is, after Trump, after Brexit, that answer kind of isn't quite good enough anymore. And yet, I think we're reaching the point where the public just won't accept that as a reality, and at some point, the government will step in, right, and I think they understand that. There have been press reports that Facebook may have potentially developed software to suppress posts from appearing in people's news feeds in specific geographic areas. Is that accurate? Has Facebook developed software to suppress posts from appearing in people's news feeds in specific geographic areas?
We do have many instances where we have content reported to us from foreign governments that is illegal under the laws of those governments, so we deploy what we call geo-blocking or IP blocking so that the content will not be visible in that country but remains available on the server-- So, for example, if criticizing a government is illegal in that country, you have the capability to block them from criticizing the government and thereby gaining entry into that country and being allowed to operate. So, in-- in the vast majority of cases where we are on notice of local-- locally illegal content, it has nothing to do with political expression.

indistinct shouting

sirens wailing

narrator

Facebook removes everything and anything from their social media platform when Turkish authorities ask them to do so. Political speech or content of a political nature is, in most cases, the main subject for these removals. I'll show you some examples in terms of what has been subject to blocking or removal. Photos. Burning the Turkish flag. A Kurdistan map. Caricatures or cartoons mocking Erdogan. Whichever platform you look, whether it's YouTube, whether it's Facebook, whether it's Twitter, Turkey is the country which restricts more than anyone else across the globe. Turkey has always been a country of censorship. The traditional media is increasingly controlled by the government, so Turkish people have been turning to social media platforms. But now they're constantly pressured by the Turkish authorities to remove or block content. These companies started to do deals with the government authorities because none of these platforms want to be blocked completely from Turkey, because then they would be losing users and business. Delete. Delete. Delete. Delete. Delete. YouTube was so visual and was able to cross boundaries, so, around 2007, we started to experience a lot of blockages. So, when a government doesn't know how to reach you, they just turn you off in their country. It took us a while to figure out what it was they were upset about. We finally managed to get ahold of the specific videos that they were unhappy with, some of which were about Ataturk, some which they claimed were terrorist videos that, again, violated Turkish law, and so I remember being up at, like, 2:00 in the morning one night, reviewing 67 videos that the government was upset with, and then made decisions from there about what to remove and what to keep up. 
There was a point at which, for some videos which weren't gonna get removed from the service generally because they didn't violate our community guidelines but were illegal in Turkey, that we decided to block by the IP address for Turkish users so that users in Turkey would not be able to see those videos. I did not love that resolution, but that's where we got to. These are really backroom deals that the public doesn't have a lot of information about. When these governments, in a kind of back-channel way, go to the companies and say, "We want you to restrict this, this expression on your platform," it will push companies over time to make these decisions on their own, and they have to make decisions about what the law means so that the companies are deciding what's lawful and what's not, what's illegal and what's legitimate, and that outsourcing to companies, I think, should be really quite disturbing to people in democratic societies.

horns honking

narrator

The nature of the job itself, you are not allowed to commit one mistake. If you commit one mistake, you could ruin one, more than one life. It could trigger war. It could trigger bullying. It could trigger killing one's life. So the opinions or the thinking of the users of the app depends upon the content moderators. Our job is not easy as what you think.

speaking foreign language

speaking English

narrator

When people say hate speech isn't free speech or free speech isn't hate speech, they are completely wrong because the First Amendment is specifically designed for the things that you don't want to hear. I've been an upstart forever, man, or I've been, like, the [bleep] kicker for a long time, and I'm gonna be [bleep] kicker until I die. Platforms like Facebook, it's available. It's accessible. You just need to be crafty. With social networking, like on YouTube or Facebook or whatever, you just have to have the skills enough to shoot something and upload it. I believe, the whole refugee thing, you just don't open the gate and just let a bunch of people in, because it's like, you find out that a lot of them have diseases, a lot of them are criminals, a lot of them are rapists. The minute a Sharia police walks up to my wife and says, "Excuse me," whatever, I'm gonna knock the [bleep] out of him. It's like, "[bleep] you, man. Carry your goat [bleep] ass back to whatever fifth-world [bleep] hole you came from."

whistle blows

indistinct chatter

narrator

I've been working, me and my family, all the time!

indistinct shouting

indistinct chatter

narrator

This is Sabo. I'm signing on from Berkeley. It's good seeing all the patriots and all the people on the right that I saw here. There's a huge crowd of amazing people down the street. There's still a pretty big police presence here. I'm kind of curious how long they're gonna be around, because I know as soon as they go, it wouldn't surprise me if people start fighting. You're a coward behind the camera! Shut up! The United States is so politically polarized that, I mean, someone from the sort of blue end of the spectrum can't even have a conversation with someone from the red end of the spectrum. And, I mean, there's lots of reasons for that. I don't think Facebook created this, but I think Facebook definitely amplifies it and makes it worse. You know, it used to be the case that every citizen had a right to an opinion, and now every citizen has a right to their own reality, right, to their own truth, to their own set of facts, and, you know, Facebook very intentionally flatters those sets of facts by showing you, you know, what you want to hear, effectively, and effectively filtering out what you don't want to hear, by and large, and so I think, to the degree-- you know, democracy requires that we actually have a common set of both rules of behavior and also ground truth that we sort of debate around, right? And if you don't have rules of behavior, due to whatever reason, you don't have a basic ground truth, then I think democracy becomes a little impossible. Can you reflect back on what he's saying? Why did I come here? I have no idea why I even came to speak. You know, I have a question. No, no, no, that's not this process. Feed back to him what he said. I don't remember! - Okay. Tell him what you understand about what he was saying. Do I look white? - Hold on. Hold on. Hold on. Hold on. Hold on. Try to listen. - So listen. Okay? Listen, man. Why are you yelling at me? You're talking. - You're preaching to me. You are talking. - No.
Tell me what I'm saying to you. You're saying-- Tell me what I'm saying to you. I think you're frustrated. We need a man who-- Yes, and what did I say to you? What did I say to you? You're yelling at me! What am I saying to you? Standing up against white supremacy... Some leftist thinks you're being politically incorrect. We're the ones with the guns, the bullets, and the training, and as soon as we can start shooting, believe me, all that [bleep] gonna go away really fast.

whistling in the distance

gunshots, shouting

narrator

Who's running now, bitch?

indistinct chatter

narrator

Hey, Nazi [bleep]. Hey! Hey!

indistinct shouting

narrator

I don't know that we can place all of the blame and responsibility for hatred in our world on the social media platforms, but where I think that the... new tech companies need to really reflect is whether they promote that type of engagement, whether they have built systems that encourage and accelerate the most outrageous and offensive statements rather than the ones that will create more understanding.

indistinct shouting

narrator

Chill the [bleep] out!

shouting continues

narrator

Well, one of the misconceptions is that human nature is human nature, and then technology is just this neutral tool. It's not amplifying anything. But this is not true, because technology does have a bias, and that bias that it's-- it has a goal. It's actually seeking a goal, and it's-- the goal it's seeking is, what will get the most number of people's attention? What tends to work on billions of people at successfully extracting their attention out of them and keeping it, and not just getting attention but then getting them to share things? And so it turns out that outrage is really good at doing that. Whether Facebook wants to or not, they actually benefit at getting more attention when they show feeds that are filled with outrage versus if they said, "Let's not show those things." It amplifies that which is most divisive, that which is most outrageous, that which is most fearful. A whole environment tuned to offer us the worst of ourselves. I'll start with you, Mr. Stretch. Facebook's fastest-growing markets are in the developing world. For example, Facebook is being used today as a breeding ground for hate speech against Rohingya refugees in Myanmar. These are especially vulnerable people. They're being violently persecuted. The leadership in that country is not doing a darn thing. What are you doing to make sure it's not used to undermine nascent democracies, especially in the undermining-- it's not losing votes, it's losing lives? Senator, thank you for the question. We do have an obligation to make sure that it is not misused, and the way-- Understand, we're talking about lives. I--Senator, I don't disagree, and we do believe we have a role to play in raising visibility but, at the same time, not be used as a tool to, for example, foment hatred or glorify violence in any way.

speaking English

indistinct shouting

speaks indistinctly

woman speaking foreign language

sobs

speaking English

indistinct shouting

narrator

What's happening with the Rohingya genocide in Burma is obviously-- this is just one of so many social externalities that are being created by a few platforms that are made right here in California by a handful of people who look kind of like me, you know, young white guy, you know, engineers, whose decisions, no matter how thoughtful or conscious or less conscious they are, will impact what 2 billion people are thinking. You know, the people in Burma have no accountability loop. They can't say there's a pothole on the street called "this fake news that's leading to this genocide." They're inhabiting this environment, living in Facebook every day, and they're spotting this pothole called "fake news," which is feeding genocide, and they have no way to report that and get it actually taken care of. We ought to be really careful about the thing we've built.

indistinct shouting

siren chirping, woman speaking indistinctly

woman wailing indistinctly

narrator

please no, don't let him be gone

speaks foreign language

dog barking

narrator

During my time, there was the case of the one who committed suicide. I was responsible in conducting and diagnosing anything that had something to do with mental health. This guy who committed suicide has been in the company since the very start. I saw in his eyes at the time that I was talking to him that he is very sad. I ask him if I can help him, but he never really admitted that he has a problem. He hanged himself in his house at the front of his laptop. With a rope... on his neck. And with the laptop at his front. My disappointment is that I was not able to help him. Three times he already informed the boss, the operations manager, to please transfer him, and if he complained already that he wanted to be transferred, the management should do something about it. Maybe this is a cry for help already.

woman speaking foreign language

narrator

I'm different from what I am before. It's just like a virus in me, wherein it's slowly penetrating in my brain, and the reaction of my body is like I'm working as a moderator day-to-day, and then I quit. I need to stop. There's something wrong happening. I suddenly asked myself, "Why am I doing this?" Just for the people to think that it's safe to go online. When, in fact, in your everyday job, it's not safe for you. We're enslaving ourselves. We shouldn't wake up and be okay with that, because it's not okay. We should wake up to reality.

birds calling

children shouting

thunder rumbling

narrator

It takes courage to choose hope over fear. To say that we can build something and make it better than it has ever been before. You have to be optimistic to think that you can change the world. We do it one connection at a time. One innovation at a time. Day after day after day. And that's why I think the work that we're all doing together is more important now than it's ever been before.

applause

narrator

Ignore. Ignore. Delete. Delete. Ignore. Ignore. Ignore. Ignore. Ignore.

dramatic music

narrator

"Raw" by Paradox Paradise

mellow music

narrator

PBS Your Home for Independent Film
